How do you convert a YOLO NAS model to the blob format for OAK cameras?

| 1. For conversion and usage tutorials covering other YOLO models, see |

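▌Converting .pt to .onnx

Use the script below to convert a YOLO NAS model to an ONNX model. If openvino_dev is installed, the model can then be converted further to an OpenVINO model:

Install the dependency:

pip install super_gradients

Example usage: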

python export_yolo_nas.py -m yolo_nas_s -imgsz 640

usage: export_yolo_nas.py [-h] [-m {yolo_nas_s,yolo_nas_m,yolo_nas_l}] [-imgsz IMG_SIZE [IMG_SIZE ...]]
                          [-op OPSET] [-n NAME] [-o OUTPUT_DIR] [-b] [-s] [-sh SHAVES]
                          [-t {docker,blobconverter,local}]

Tool for converting YOLO NAS models to the blob format used by OAK

optional arguments:
  -h, --help            show this help message and exit
  -m {yolo_nas_s,yolo_nas_m,yolo_nas_l}, -i {yolo_nas_s,yolo_nas_m,yolo_nas_l}, -w {yolo_nas_s,yolo_nas_m,yolo_nas_l}, --input_model {yolo_nas_s,yolo_nas_m,yolo_nas_l}
                        model name (default: yolo_nas_s)
  -imgsz IMG_SIZE [IMG_SIZE ...], --img-size IMG_SIZE [IMG_SIZE ...]
                        image size (default: [640, 640])
  -op OPSET, --opset OPSET
                        opset version (default: 12)
  -n NAME, --name NAME  The name of the model to be saved, none means using the same name as the input
                        model (default: None)
  -o OUTPUT_DIR, --output_dir OUTPUT_DIR
                        Directory for saving files, none means using the same path as the input model
                        (default: None)
  -b, --blob            OAK Blob export (default: False)
  -s, --spatial_detection
                        Inference with depth information (default: False)
  -sh SHAVES, --shaves SHAVES
                        Number of shaves to use, none means 5 with spatial detection and 6 otherwise
                        (default: None)
  -t {docker,blobconverter,local}, --convert_tool {docker,blobconverter,local}
                        Which tool is used to convert, docker: should already have docker
                        (https://docs.docker.com/get-docker/) and docker-py (pip install docker)
                        installed; blobconverter: uses an online server to convert the model and should
                        already have blobconverter (pip install blobconverter); local: use openvino-dev
                        (pip install openvino-dev) and openvino 2022.1
                        (https://docs.oakchina.cn/en/latest/pages/Advanced/Neural_networks/local_convert_openvino.html#id2)
                        to convert (default: blobconverter)
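Several options in the help text default to None and are only filled in after parsing. The sketch below mirrors that post-processing as it appears in the script's parse_args(): a single -imgsz value is expanded to a square size, and the shave count falls back to 5 with spatial detection and 6 otherwise. The function name `resolve_defaults` and its `root` parameter are hypothetical and exist only for illustration.

```python
from pathlib import Path


def resolve_defaults(input_model, img_size, name=None, output_dir=None,
                     shaves=None, spatial_detection=False, root=Path(".")):
    """Hypothetical helper mirroring the post-processing in parse_args()."""
    # The saved model name falls back to the input model name.
    if name is None:
        name = input_model
    # The output directory falls back to <root>/<input_model>.
    if output_dir is None:
        output_dir = Path(root) / input_model
    # A single value such as [640] is expanded to [640, 640] (height, width).
    img_size = list(img_size) * (2 if len(img_size) == 1 else 1)
    # The script uses 5 shaves when spatial detection is requested, otherwise 6.
    if shaves is None:
        shaves = 5 if spatial_detection else 6
    return {"name": name, "output_dir": output_dir, "img_size": img_size, "shaves": shaves}


print(resolve_defaults("yolo_nas_s", [640]))
```

An explicit -sh value always wins; the fallback only applies when the option is left out.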

export_yolo_nas.py:

#!/usr/bin/env python3
# -*- coding:utf-8 -*-
import argparse
import json
import time
import warnings
from io import BytesIO
from pathlib import Path

import torch
import torch.nn as nn

warnings.filterwarnings("ignore")

ROOT = Path(__file__).resolve().parent

yolo_nas = [
    "yolo_nas_s",
    "yolo_nas_m",
    "yolo_nas_l",
]

class DetectNAS(nn.Module):
    """YOLO-NAS Detect head for detection models"""

    def __init__(self, old_detect):
        super().__init__()
        self.num_classes = old_detect.num_classes  # number of classes
        self.reg_max = old_detect.reg_max
        self.num_heads = old_detect.num_heads
        self.proj_conv = old_detect.proj_conv

        for i in range(self.num_heads):
            setattr(self, f"head{i + 1}", getattr(old_detect, f"head{i + 1}"))

    def forward(self, feats):
        output = []
        for i, feat in enumerate(feats):
            b, _, h, w = feat.shape
            height_mul_width = h * w
            reg_distri, cls_logit = getattr(self, f"head{i + 1}")(feat)
            reg_dist_reduced = torch.permute(reg_distri.reshape([-1, 4, self.reg_max + 1, height_mul_width]), [0, 2, 3, 1])
            reg_dist_reduced = nn.functional.conv2d(nn.functional.softmax(reg_dist_reduced, dim=1), weight=self.proj_conv).squeeze(1)

            # cls and reg
            pred_scores = cls_logit.sigmoid()
            pred_conf, _ = pred_scores.max(1, keepdim=True)
            pred_bboxes = torch.permute(reg_dist_reduced, [0, 2, 1])
            output.append(torch.cat([pred_bboxes.reshape([-1, 4, h, w]), pred_conf, pred_scores], dim=1))

        return output

def parse_args():
    parser = argparse.ArgumentParser(
        description="Tool for converting YOLO NAS models to the blob format used by OAK",
        formatter_class=argparse.ArgumentDefaultsHelpFormatter,
    )
    parser.add_argument(
        "-m",
        "-i",
        "-w",
        "--input_model",
        type=str,
        help="model name ",
        default="yolo_nas_s",
        choices=yolo_nas,
    )
    parser.add_argument(
        "-imgsz",
        "--img-size",
        nargs="+",
        type=int,
        default=[640, 640],
        help="image size",
    )  # height, width
    parser.add_argument("-op", "--opset", type=int, default=12, help="opset version")
    parser.add_argument(
        "-n",
        "--name",
        type=str,
        help="The name of the model to be saved, none means using the same name as the input model",
    )
    parser.add_argument(
        "-o",
        "--output_dir",
        type=Path,
        help="Directory for saving files, none means using the same path as the input model",
    )
    parser.add_argument(
        "-b",
        "--blob",
        action="store_true",
        help="OAK Blob export",
    )
    parser.add_argument(
        "-s",
        "--spatial_detection",
        action="store_true",
        help="Inference with depth information",
    )
    parser.add_argument(
        "-sh",
        "--shaves",
        type=int,
        help="Number of shaves to use, none means 5 with spatial detection and 6 otherwise",
    )
    parser.add_argument(
        "-t",
        "--convert_tool",
        type=str,
        help="Which tool is used to convert, docker: should already have docker (https://docs.docker.com/get-docker/) and docker-py (pip install docker) installed; blobconverter: uses an online server to convert the model and should already have blobconverter (pip install blobconverter); local: use openvino-dev (pip install openvino-dev) and openvino 2022.1 (https://docs.oakchina.cn/en/latest/pages/Advanced/Neural_networks/local_convert_openvino.html#id2) to convert",
        default="blobconverter",
        choices=["docker", "blobconverter", "local"],
    )

    parse_arg = parser.parse_args()

    # Fall back to the input model name and a sibling directory when not given
    if parse_arg.name is None:
        parse_arg.name = parse_arg.input_model

    if parse_arg.output_dir is None:
        parse_arg.output_dir = ROOT.joinpath(parse_arg.input_model)

    parse_arg.output_dir = parse_arg.output_dir.resolve().absolute()
    parse_arg.output_dir.mkdir(parents=True, exist_ok=True)

    parse_arg.img_size *= 2 if len(parse_arg.img_size) == 1 else 1  # expand

    if parse_arg.shaves is None:
        parse_arg.shaves = 5 if parse_arg.spatial_detection else 6

    return parse_arg

def export(input_model, img_size, output_model, opset, **kwargs):
    t = time.time()

    from super_gradients.training import models

    # Load the PyTorch model selected with -m
    model = models.get(input_model, pretrained_weights="coco")

    labels = model._class_names  # get class names
    labels = labels if isinstance(labels, list) else list(labels.values())

    # check num classes and labels
    assert model.num_classes == len(labels), f"Model class count {model.num_classes} != len(names) {len(labels)}"

    # Replace with the custom Detection Head
    model.heads = DetectNAS(model.heads)
    num_branches = model.heads.num_heads

    # Input
    img = torch.zeros(1, 3, *img_size)

    model.eval()
    model.prep_model_for_conversion(input_size=[1, 3, *img_size])
    y = model(img)  # dry runs

    # ONNX export
    try:
        import onnx

        print()
        print("Starting ONNX export with onnx %s..." % onnx.__version__)
        output_list = ["output%s_yolov6r2" % (i + 1) for i in range(num_branches)]
        with BytesIO() as f:
            torch.onnx.export(
                model,
                img,
                f,
                verbose=False,
                opset_version=opset,
                input_names=["images"],
                output_names=output_list,
            )

            # Checks
            onnx_model = onnx.load_from_string(f.getvalue())  # load onnx model
            onnx.checker.check_model(onnx_model)  # check onnx model

        try:
            import onnxsim

            print("Starting to simplify ONNX...")
            onnx_model, check = onnxsim.simplify(onnx_model)
            assert check, "assert check failed"

        except ImportError:
            print(
                "onnxsim is not found, if you want to simplify the onnx, "
                + "you should install it:\n\t"
                + "pip install -U onnxsim onnxruntime\n"
                + "then use:\n\t"
                + f'python -m onnxsim "{output_model}" "{output_model}"'
            )
        except Exception:
            print("Simplifier failure")

        onnx.save(onnx_model, output_model)
        print("ONNX export success, saved as:\n\t%s" % output_model)

    except Exception as e:
        # Report the cause instead of hiding it
        print("ONNX export failure: %s" % e)

    # generate anchors and sides
    anchors = []

    # generate masks
    masks = dict()

    print("anchors:\n\t%s" % anchors)
    print("anchor_masks:\n\t%s" % masks)
    export_json = output_model.with_suffix(".json")
    export_json.write_text(
        json.dumps(
            {
                "nn_config": {
                    "output_format": "detection",
                    "NN_family": "YOLO",
                    "input_size": f"{img_size[0]}x{img_size[1]}",
                    "NN_specific_metadata": {
                        "classes": model.num_classes,
                        "coordinates": 4,
                        "anchors": anchors,
                        "anchor_masks": masks,
                        "iou_threshold": 0.3,
                        "confidence_threshold": 0.5,
                    },
                },
                "mappings": {"labels": labels},
            },
            indent=4,
        )
    )
    print("Anchors data export success, saved as:\n\t%s" % export_json)

    # Finish
    print("Export complete (%.2fs).\n" % (time.time() - t))

def convert(convert_tool, output_model, shaves, output_dir, name, **kwargs):
    t = time.time()

    export_dir: Path = output_dir.joinpath(name + "_openvino")
    export_dir.mkdir(parents=True, exist_ok=True)

    export_xml = export_dir.joinpath(name + ".xml")
    export_blob = export_dir.joinpath(name + ".blob")

    if convert_tool == "blobconverter":
        from zipfile import ZIP_LZMA, ZipFile

        import blobconverter

        blob_path = blobconverter.from_onnx(
            model=str(output_model),
            data_type="FP16",
            shaves=shaves,
            use_cache=False,
            version="2022.1",
            output_dir=export_dir,
            optimizer_params=[
                "--scale=255",
                "--reverse_input_channel",
                "--use_new_frontend",
            ],
            download_ir=True,
        )
        # Unpack the downloaded archive, then delete it
        with ZipFile(blob_path, "r", ZIP_LZMA) as zip_obj:
            for member in zip_obj.namelist():
                zip_obj.extract(
                    member,
                    output_dir,
                )
        blob_path.unlink()

    elif convert_tool == "docker":
        import docker

        export_dir_in_docker = Path("/io").joinpath(export_dir.name)
        export_xml_in_docker = export_dir_in_docker.joinpath(name + ".xml")
        export_blob_in_docker = export_dir_in_docker.joinpath(name + ".blob")

        client = docker.from_env()
        image = client.images.pull("openvino/ubuntu20_dev", tag="2022.1.0")
        docker_output = client.containers.run(
            image=image.tags[0],
            command=f'bash -c "mo -m {name}.onnx -n {name} -o {export_dir_in_docker} '
            + "--static_shape --reverse_input_channels --scale=255 --use_new_frontend "
            + "&& echo 'MYRIAD_ENABLE_MX_BOOT NO' | tee /tmp/myriad.conf >> /dev/null "
            + "&& /opt/intel/openvino/tools/compile_tool/compile_tool -m "
            + f"{export_xml_in_docker} -o {export_blob_in_docker} -ip U8 -VPU_NUMBER_OF_SHAVES {shaves} "
            + f'-VPU_NUMBER_OF_CMX_SLICES {shaves} -d MYRIAD -c /tmp/myriad.conf"',
            remove=True,
            volumes=[
                f"{output_dir}:/io",
            ],
            working_dir="/io",
        )
        print(docker_output.decode("utf8"))

    else:
        import subprocess as sp

        # OpenVINO export
        print("Starting to export OpenVINO...")
        OpenVINO_cmd = "mo --input_model %s --output_dir %s --data_type FP16 --scale=255 --reverse_input_channel" % (output_model, export_dir)
        try:
            sp.check_output(OpenVINO_cmd, shell=True)
            print("OpenVINO export success, saved as %s" % export_dir)
        except sp.CalledProcessError:
            print("")
            print("OpenVINO export failure!")
            print("By the way, you can try to export OpenVINO use:\n\t%s" % OpenVINO_cmd)

        # OAK Blob export
        print("Then you can try to export blob use:")
        # The two shell commands are chained with "&&" so the printed line can be pasted as-is
        blob_cmd = (
            "echo 'MYRIAD_ENABLE_MX_BOOT ON' | tee /tmp/myriad.conf && "
            + "compile_tool -m %s -o %s -ip U8 -d MYRIAD -VPU_NUMBER_OF_SHAVES %s -VPU_NUMBER_OF_CMX_SLICES %s -c /tmp/myriad.conf"
            % (export_xml, export_blob, shaves, shaves)
        )
        print("%s" % blob_cmd)

        print("compile_tool maybe in the path: /opt/intel/openvino/tools/compile_tool/compile_tool, if you install openvino 2022.1 with apt")

    print("Blob file saved as:\n\t%s" % export_blob)
    print("Convert complete (%.2fs).\n" % (time.time() - t))

if __name__ == "__main__":
    args = parse_args()
    print(args)
    output_model = args.output_dir / (args.name + ".onnx")
    export(output_model=output_model, **vars(args))
    if args.blob:
        convert(output_model=output_model, **vars(args))
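The custom DetectNAS head in the script reduces the bin logits of each box side to a single distance: a softmax over the reg_max + 1 bins, followed by a 1x1 convolution with proj_conv. The plain-Python sketch below illustrates that step for one box side. It assumes the projection weights are simply the bin indices 0..reg_max, which the script itself does not state, and `expected_distance` is a hypothetical name.

```python
import math


def expected_distance(logits):
    """Softmax over the bin logits, then the probability-weighted mean bin index."""
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    # Assumption: the projection weights are the bin indices 0..reg_max.
    return sum(i * p for i, p in enumerate(probs))


# With reg_max = 3 there are 4 bins per box side.
uniform = expected_distance([0.0, 0.0, 0.0, 0.0])        # no preferred bin
peaked = expected_distance([-20.0, -20.0, 20.0, -20.0])  # bin 2 dominates
print(round(uniform, 3), round(peaked, 3))
```

Under this assumption a flat distribution lands in the middle of the bin range, while a sharply peaked one lands on the index of the dominant bin.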

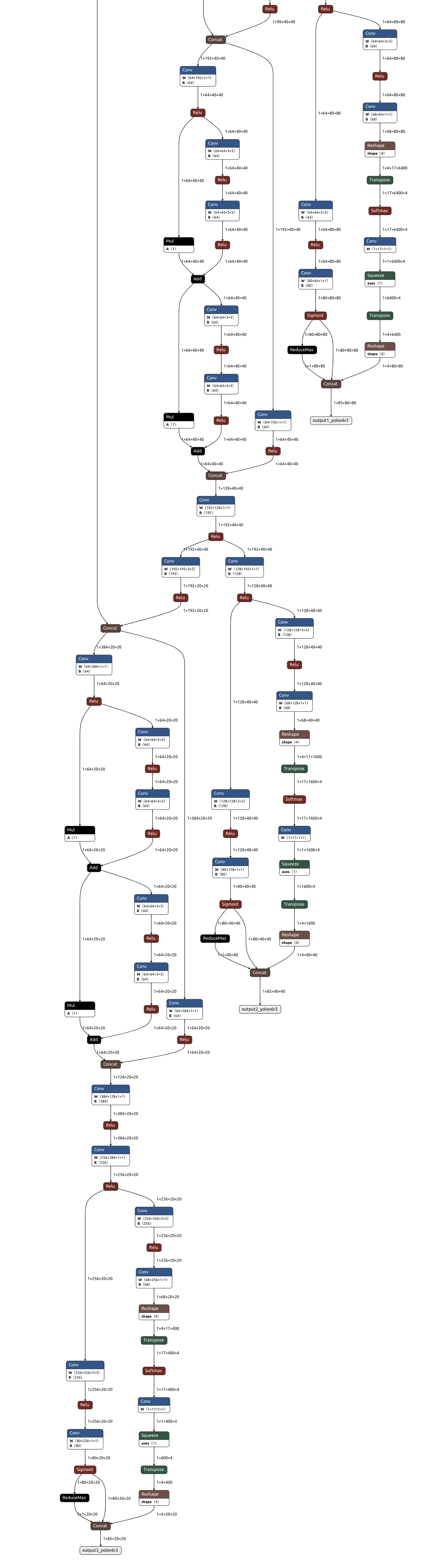

可以使用 Netron 查看模型结构:
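When checking the exported graph, it helps to know what the outputs should look like. Each head in the export script concatenates 4 box values, 1 confidence value and the per-class scores along the channel axis, so every output has 4 + 1 + num_classes channels. The sketch below computes the expected shapes. The strides 8, 16 and 32 are an assumption about YOLO NAS that the script does not state, and `expected_output_shapes` is a hypothetical helper.

```python
def expected_output_shapes(img_size=(640, 640), num_classes=80, strides=(8, 16, 32)):
    """Return {output_name: [batch, channels, height, width]} for each head."""
    height, width = img_size
    channels = 4 + 1 + num_classes  # box, confidence, class scores
    return {
        "output%s_yolov6r2" % (i + 1): [1, channels, height // s, width // s]
        for i, s in enumerate(strides)
    }


for name, shape in expected_output_shapes().items():
    print(name, shape)
```

If the shapes shown in Netron differ, the most likely causes are a different -imgsz value or a model trained with a different number of classes.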

▌Conversion

Local conversion with OpenVINO

onnx -> openvino

mo is a script included in openvino_dev 2022.1. Install it with:

pip install openvino-dev

mo --input_model yolo_nas_s.onnx --scale=255 --reverse_input_channel

openvino -> blob

compile_tool is a tool included in the OpenVINO Runtime:

<path>/compile_tool -m yolo_nas_s.xml \
    -ip U8 -d MYRIAD \
    -VPU_NUMBER_OF_SHAVES 6 \
    -VPU_NUMBER_OF_CMX_SLICES 6

Online conversion

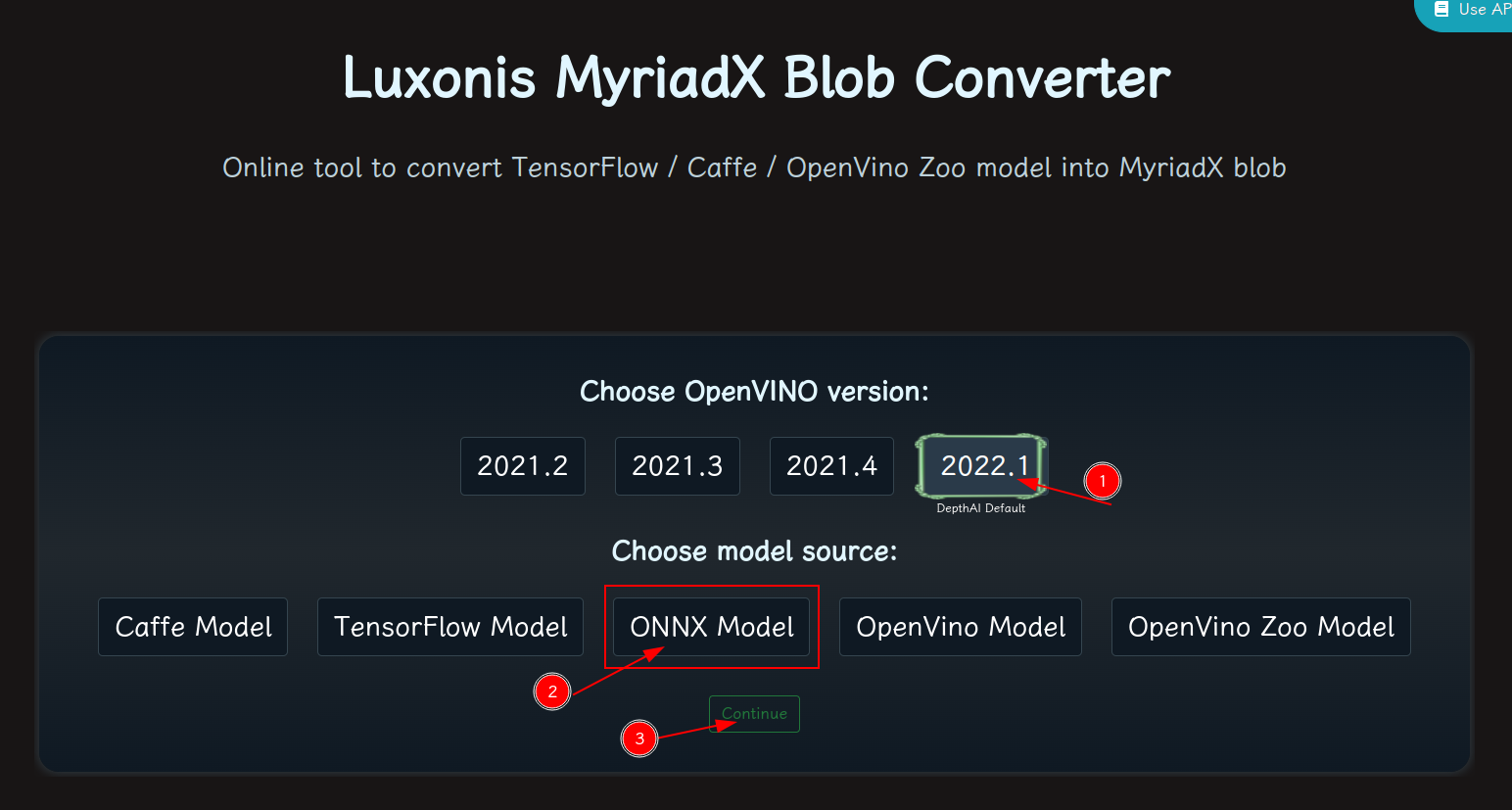

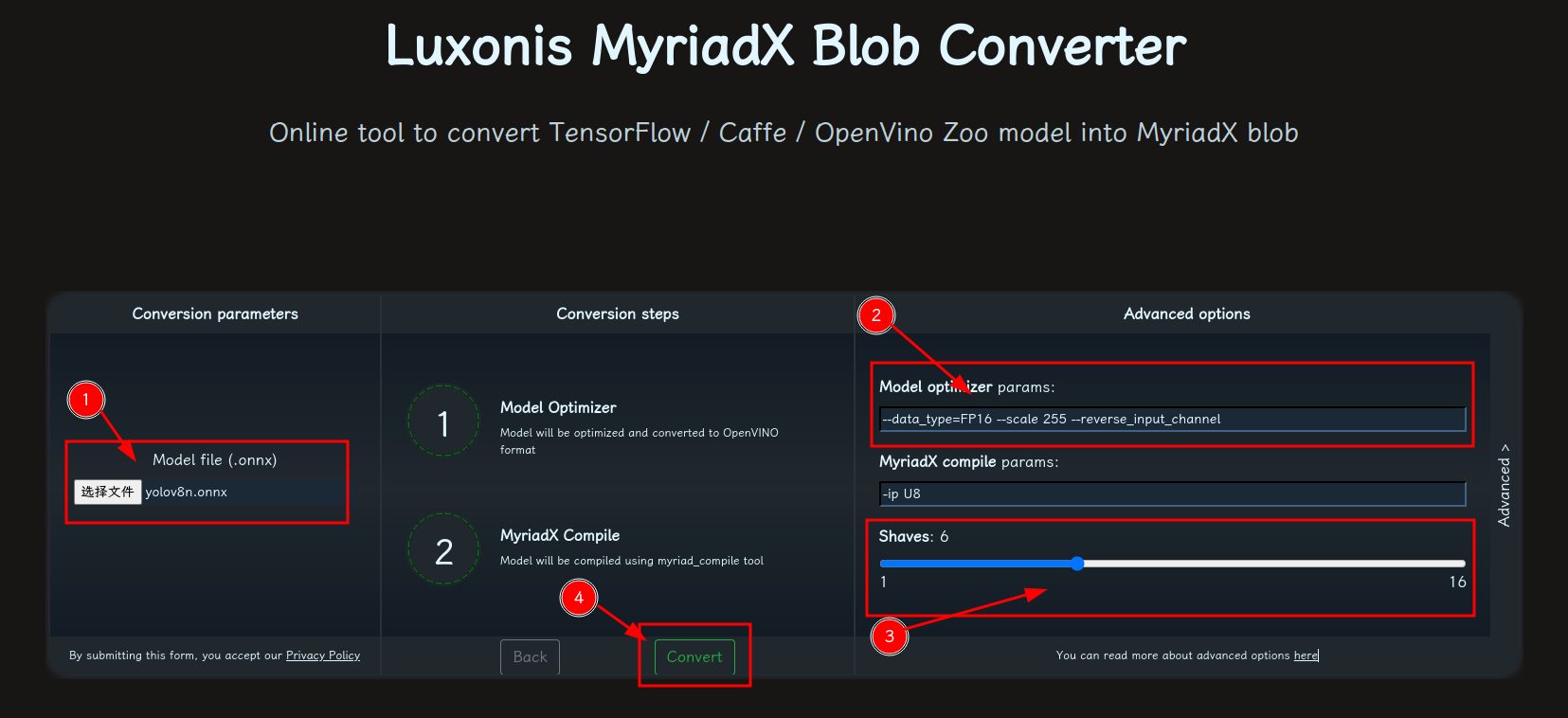

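blobconverter web page: http://blobconverter.luxonis.com/

- Open the web page and follow the steps shown in the screenshots:

- Adjust the parameters and convert the model:

  - Select the onnx model

  - Set optimizer_params to --data_type=FP16 --scale=255 --reverse_input_channel

  - Set shaves to 6

  - Convert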

blobconverter Python code

import blobconverter

blobconverter.from_onnx(
    "yolo_nas_s.onnx",
    optimizer_params=[
        "--scale=255",
        "--reverse_input_channel",
    ],
    shaves=6,
)

blobconverter CLI

blobconverter --onnx yolo_nas_s.onnx -sh 6 -o . --optimizer-params "scale=255 --reverse_input_channel"

▌DepthAI example

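Correct decoding depends on these configurable network parameters:

- setNumClasses – number of YOLO detection classes

- setIouThreshold – IoU threshold

- setConfidenceThreshold – confidence threshold; detections scoring below it are filtered out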
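The export script writes these values to a .json file next to the .onnx model, so they do not have to be hard-coded. The sketch below reads them back with the standard library. The key names follow the JSON structure in export_yolo_nas.py; the function name `load_yolo_config` and the example path in the comments are hypothetical.

```python
import json
from pathlib import Path


def load_yolo_config(json_path):
    """Read the decoding parameters from the JSON file written by export_yolo_nas.py."""
    config = json.loads(Path(json_path).read_text())
    meta = config["nn_config"]["NN_specific_metadata"]
    return {
        "num_classes": meta["classes"],
        "coordinates": meta["coordinates"],
        "anchors": meta["anchors"],
        "anchor_masks": meta["anchor_masks"],
        "iou_threshold": meta["iou_threshold"],
        "confidence_threshold": meta["confidence_threshold"],
        "labels": config["mappings"]["labels"],
    }


# Possible use with a YoloDetectionNetwork node (path shown is an example):
# params = load_yolo_config("yolo_nas_s/yolo_nas_s.json")
# detectionNetwork.setNumClasses(params["num_classes"])
# detectionNetwork.setIouThreshold(params["iou_threshold"])
# detectionNetwork.setConfidenceThreshold(params["confidence_threshold"])
```

Reading the labels from the same file also gives real class names in place of generic placeholders such as class_0, class_1.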

# coding=utf-8
import cv2
import depthai as dai
import numpy as np

numClasses = 80
model = dai.OpenVINO.Blob("yolo_nas_s.blob")
dim = next(iter(model.networkInputs.values())).dims
W, H = dim[:2]

# Derive the class count from the first output tensor of the blob
output_name, output_tensor = next(iter(model.networkOutputs.items()))
if "yolov6" in output_name:
    numClasses = output_tensor.dims[2] - 5
else:
    numClasses = output_tensor.dims[2] // 3 - 5

labelMap = [
    # "class_1","class_2","..."
    "class_%s" % i
    for i in range(numClasses)
]

# Create pipeline
pipeline = dai.Pipeline()

# Define sources and outputs
camRgb = pipeline.create(dai.node.ColorCamera)
detectionNetwork = pipeline.create(dai.node.YoloDetectionNetwork)
xoutRgb = pipeline.create(dai.node.XLinkOut)
xoutNN = pipeline.create(dai.node.XLinkOut)

xoutRgb.setStreamName("image")
xoutNN.setStreamName("nn")

# Properties
camRgb.setPreviewSize(W, H)
camRgb.setResolution(dai.ColorCameraProperties.SensorResolution.THE_1080_P)
camRgb.setInterleaved(False)
camRgb.setColorOrder(dai.ColorCameraProperties.ColorOrder.BGR)

# Network specific settings
detectionNetwork.setBlob(model)
detectionNetwork.setConfidenceThreshold(0.5)

# Yolo specific parameters
detectionNetwork.setNumClasses(numClasses)
detectionNetwork.setCoordinateSize(4)
detectionNetwork.setAnchors([])
detectionNetwork.setAnchorMasks({})
detectionNetwork.setIouThreshold(0.5)

# Linking
camRgb.preview.link(detectionNetwork.input)
camRgb.preview.link(xoutRgb.input)
detectionNetwork.out.link(xoutNN.input)

# Connect to device and start pipeline
with dai.Device(pipeline) as device:
    # Output queues will be used to get the rgb frames and nn data from the outputs defined above
    imageQueue = device.getOutputQueue(name="image", maxSize=4, blocking=False)
    detectQueue = device.getOutputQueue(name="nn", maxSize=4, blocking=False)

    frame = None
    detections = []

    # nn data, being the bounding box locations, are in <0..1> range - they need to be normalized with frame width/height
    def frameNorm(frame, bbox):
        normVals = np.full(len(bbox), frame.shape[0])
        normVals[::2] = frame.shape[1]
        return (np.clip(np.array(bbox), 0, 1) * normVals).astype(int)

    def drawText(frame, text, org, color=(255, 255, 255), thickness=1):
        cv2.putText(
            frame, text, org, cv2.FONT_HERSHEY_SIMPLEX, 0.5, (0, 0, 0), thickness + 3, cv2.LINE_AA
        )
        cv2.putText(
            frame, text, org, cv2.FONT_HERSHEY_SIMPLEX, 0.5, color, thickness, cv2.LINE_AA
        )

    def drawRect(frame, topLeft, bottomRight, color=(255, 255, 255), thickness=1):
        cv2.rectangle(frame, topLeft, bottomRight, (0, 0, 0), thickness + 3)
        cv2.rectangle(frame, topLeft, bottomRight, color, thickness)

    def displayFrame(name, frame):
        color = (128, 128, 128)
        for detection in detections:
            bbox = frameNorm(
                frame, (detection.xmin, detection.ymin, detection.xmax, detection.ymax)
            )
            drawText(
                frame=frame,
                text=labelMap[detection.label],
                org=(bbox[0] + 10, bbox[1] + 20),
            )
            drawText(
                frame=frame,
                text=f"{detection.confidence:.2%}",
                org=(bbox[0] + 10, bbox[1] + 35),
            )
            drawRect(
                frame=frame,
                topLeft=(bbox[0], bbox[1]),
                bottomRight=(bbox[2], bbox[3]),
                color=color,
            )
        # Show the frame
        cv2.imshow(name, frame)

    while True:
        imageQueueData = imageQueue.tryGet()
        detectQueueData = detectQueue.tryGet()

        if imageQueueData is not None:
            frame = imageQueueData.getCvFrame()

        if detectQueueData is not None:
            detections = detectQueueData.detections

        if frame is not None:
            displayFrame("rgb", frame)

        if cv2.waitKey(1) == ord("q"):
            break
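The detections returned by the network carry box coordinates in the 0..1 range, which is why the example scales them by the frame size before drawing. The sketch below shows the same mapping in plain Python so it can be tried without a camera or NumPy. The name `to_pixels` is hypothetical; it is meant as an equivalent of frameNorm for a single box, not as part of the DepthAI API.

```python
def to_pixels(frame_shape, bbox):
    """Scale a normalized (xmin, ymin, xmax, ymax) box to integer pixel coordinates."""
    height, width = frame_shape[:2]
    scales = (width, height, width, height)
    # Clamp to [0, 1] first so boxes that overshoot the frame stay drawable.
    return [int(min(max(v, 0.0), 1.0) * s) for v, s in zip(bbox, scales)]


# A 640x640 BGR frame; xmax = 1.2 overshoots and is clamped to the right edge.
print(to_pixels((640, 640, 3), (0.25, 0.5, 1.2, 0.75)))  # [160, 320, 640, 480]
```

x values are multiplied by the frame width and y values by the frame height, matching the way frameNorm fills every second entry of normVals with frame.shape[1].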